Seven Ways to Deploy Fastly’s Next-Gen WAF in Kubernetes

Sports coaches used to rely on paper playbooks to review strategy with their teams. Now, many use tablet computers, which make reviewing key plays and athlete performance faster and more flexible. Likewise, DevOps teams adopt new tools and frameworks, such as application containers and Kubernetes (a container orchestration system), to build and release applications more quickly and efficiently.

Containers, such as those built with Docker, package an application together with everything it needs to run consistently in any environment, so developers can release code faster. With the rise of container usage, Kubernetes has become a popular means to automate the deployment and management of containerized applications at scale. According to CNCF’s 2021 Cloud Native Survey Report:

A record high of 96% of organizations are either using or evaluating Kubernetes – a major increase from 83% in 2020 and 78% in 2019.

93% of organizations are using or plan to use containers in production (up slightly from 92% in the prior year’s survey).

More than 5.6 million developers are thought to be using Kubernetes today.

At Fastly, we recognize the need to provide flexible installation options to customers using Kubernetes. In a prior blog post, we walked through one way to use the Fastly Next-Gen WAF (powered by Signal Sciences) with Kubernetes to autoscale Layer 7 security for your web applications. In this post, we’ll outline the full range of ways to install Fastly Next-Gen WAF with Kubernetes.

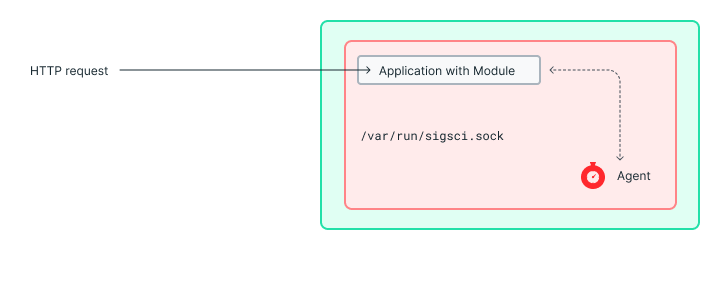

First, a quick refresher on our software components: we built and patented the communication between an agent and a module, both of which run on customers’ infrastructure. They communicate with our Cloud Engine out of band to receive updated, enhanced intelligence. The module is not needed when Fastly’s Next-Gen WAF runs in reverse proxy mode, which you’ll read about below.

You can think of our deployment options in a 3×4 matrix: there are 3 “layers” where you can install Fastly’s Next-Gen WAF in Kubernetes, and 4 “methods” of how you can deploy.

Layers

We’ll work our way from the top of the stack down to the application layer with reasons why you’d choose one versus the other based on how your unique organization manages software deployment.

At the top is the Kubernetes ingress controller. Choose this option if you a) have an ingress controller and b) have a team that wants to centralize the security of all apps residing behind the ingress controller.

Second is the middle-tier or service layer, which you’d choose if you a) don’t have an ingress controller or b) run another load balancer in front of your traffic but don’t want to install the WAF there (even though Fastly Next-Gen WAF can be installed on it).

Third is the application layer, which you’d choose to let application teams control the install themselves.

Methods

Within each of the layers described above, you can deploy Fastly’s Next-Gen WAF in conjunction with Kubernetes in any of the following four ways:

Run the module and agent in the same container.

Run the module and agent in separate sidecar containers.

Run the agent in reverse proxy mode in the same application container.

Run the agent in reverse proxy mode in a sidecar to the application container.

With three layers and four deployment methods, the flexibility that containers and Kubernetes offer becomes readily apparent with 12 install options. While Fastly Next-Gen WAF supports all 12 options, we highlight examples of the starred methods in the table below:

| Install Method | Layer 1: Ingress Controller | Layer 2: Mid-Tier Service | Layer 3: App Tier |
|---|---|---|---|
| 1. Agent + module in same app container | ✓* | ✓ | ✓* |
| 2. Agent + module in different containers | ✓* | ✓ | ✓* |
| 3. Agent in reverse proxy mode in same container as app | ✓ | ✓ | ✓* |
| 4. Agent in reverse proxy in sidecar container | ✓ | ✓* | ✓* |

* Installation method example provided below.
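
To make the matrix concrete, here is a minimal Python sketch that enumerates the 12 layer and method combinations and flags the ones that have an example in this post. The shortened labels and the `STARRED` set are transcribed from the table for illustration only; they are not part of any Fastly tooling.

```python
from itertools import product

# Labels shortened from the table above, for illustration only.
LAYERS = ["ingress controller", "mid-tier service", "app tier"]
METHODS = [
    "agent + module, same container",
    "agent + module, separate containers",
    "agent in reverse proxy, same container as app",
    "agent in reverse proxy, sidecar container",
]

# (method number, layer number) pairs marked with * in the table.
STARRED = {(1, 1), (1, 3), (2, 1), (2, 3), (3, 3), (4, 2), (4, 3)}


def install_options():
    """Return (layer, method, has_example) for every combination."""
    return [
        (layer, method, (method, layer) in STARRED)
        for method, layer in product(
            range(1, len(METHODS) + 1), range(1, len(LAYERS) + 1)
        )
    ]


if __name__ == "__main__":
    for layer, method, has_example in install_options():
        mark = "*" if has_example else " "
        print(
            f"{mark} Layer {layer} ({LAYERS[layer - 1]}) / "
            f"Method {method} ({METHODS[method - 1]})"
        )
```

Counting the starred entries gives the seven examples the title refers to.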

Method 1: Pod Container – Agent and Module

Here you can deploy the agent and module in the same Kubernetes pod container. The agent and module sit in line with the application.

Method 2: Multi-Container – Agent and Module

This option separates the module and agent. The module is deployed with the app in a single container, and the agent is deployed in a separate container. Even though the module and agent are in different containers, they’re both in the same Kubernetes pod. The agent and module containers share a volume so they can communicate over a domain socket.
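
The shared-volume arrangement can be sketched as a pod manifest. The following Python snippet builds one as a plain dictionary and prints it as JSON, which `kubectl apply -f` accepts. It is a minimal sketch under stated assumptions: the image names, the volume name `waf-socket`, and the mount path `/var/run/waf-agent` are all hypothetical placeholders, not values taken from the product documentation.

```python
import json

# Hypothetical directory where the agent would create its domain socket.
SOCKET_DIR = "/var/run/waf-agent"
VOLUME_NAME = "waf-socket"  # hypothetical volume name


def multi_container_pod(app_image, agent_image):
    """Build a Pod with an app+module container and an agent container.

    Both containers mount the same emptyDir volume, so a socket file
    created by one is visible to the other.
    """
    def mount():
        return {"name": VOLUME_NAME, "mountPath": SOCKET_DIR}

    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": "app-with-waf-agent"},
        "spec": {
            "containers": [
                # Application image with the module already installed.
                {"name": "app", "image": app_image, "volumeMounts": [mount()]},
                # Agent running in its own container in the same pod.
                {"name": "waf-agent", "image": agent_image, "volumeMounts": [mount()]},
            ],
            # emptyDir lives as long as the pod and is shared by its containers.
            "volumes": [{"name": VOLUME_NAME, "emptyDir": {}}],
        },
    }


if __name__ == "__main__":
    pod = multi_container_pod("example/app-with-module:1.0", "example/waf-agent:1.0")
    print(json.dumps(pod, indent=2))
```

An `emptyDir` volume is a natural fit here because it exists only for the lifetime of the pod, which matches the lifetime of the socket.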

Method 3: Pod Container – Agent in Reverse Proxy Mode

When the Fastly Next-Gen WAF agent runs in reverse proxy mode, it can sit in the same pod container as the application. As in Method 1, Fastly Next-Gen WAF sits in line with the application; the only difference is that no module is required.

Method 4: Pod Multi-Container – Agent in Reverse Proxy in Sidecar Container

Similar to Method 3, the agent is deployed in reverse proxy mode without a module. The difference is that the agent runs in a separate container from the application. Each request passes through the agent container, which inspects it before forwarding it to the application container. The agent and application containers co-exist in the same pod.
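
A sidecar layout like this one might look as follows. This Python sketch builds a pod and a service as dictionaries; the ports, image names, and the environment variable names `WAF_LISTEN_ADDR` and `WAF_UPSTREAM_URL` are hypothetical stand-ins for whatever settings the agent actually uses in reverse proxy mode. The key idea it illustrates is that the service targets the agent's port, so traffic reaches the agent before the application.

```python
import json

AGENT_PORT = 8000  # assumed port the agent listens on
APP_PORT = 8080    # assumed port the application listens on


def reverse_proxy_sidecar(app_image, agent_image):
    """Return (pod, service) manifests for an agent sidecar in front of an app."""
    labels = {"app": "demo"}
    pod = {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": "app-with-waf-sidecar", "labels": labels},
        "spec": {
            "containers": [
                {
                    "name": "waf-agent",
                    "image": agent_image,
                    "ports": [{"containerPort": AGENT_PORT}],
                    # Hypothetical setting names. Containers in one pod share
                    # a network namespace, so the app is reachable on localhost.
                    "env": [
                        {"name": "WAF_LISTEN_ADDR", "value": f"0.0.0.0:{AGENT_PORT}"},
                        {"name": "WAF_UPSTREAM_URL", "value": f"http://127.0.0.1:{APP_PORT}"},
                    ],
                },
                {
                    "name": "app",
                    "image": app_image,
                    "ports": [{"containerPort": APP_PORT}],
                },
            ]
        },
    }
    service = {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": "demo"},
        "spec": {
            "selector": dict(labels),
            # Route to the agent port so requests are inspected first.
            "ports": [{"port": 80, "targetPort": AGENT_PORT}],
        },
    }
    return pod, service


if __name__ == "__main__":
    for manifest in reverse_proxy_sidecar("example/app:1.0", "example/waf-agent:1.0"):
        print(json.dumps(manifest, indent=2))
```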

Layers 1 and 2 rely on the ingress service. Unlike traditional WAFs, which require individual rule tuning for each application that sits behind the ingress service, Fastly’s Next-Gen WAF requires none of that overhead because of our dynamic detections, which use our proprietary SmartParse methodology.

Layer 1 Method 1: Ingress Pod Container – NGINX or HAProxy

This option deploys the module and agent in the same container as the NGINX or HAProxy ingress pod. The module is installed on NGINX or HAProxy, and the agent runs in the same container. All requests go through the ingress pod, which autoscales as needed. The application pod sits behind the ingress pod.

Layer 1 Method 2: Ingress Pod Multi-Container – NGINX or HAProxy

This option is similar to Layer 1 Method 1, the only difference being that the module and agent run in separate containers within the same ingress pod. Just as in Method 2, the agent and module containers must share a volume for the domain socket. This deployment method protects Kubernetes clusters from exposure to the open internet.

Layer 2 Method 4: Multi-Service – Agent in Reverse Proxy

This deployment option runs the agent in reverse proxy mode in a separate pod from the application. The agent pod receives and inspects each request, then either blocks it or passes it to the application pod. This allows the agent and the application to autoscale independently.
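
As a rough illustration of the multi-service layout, the Python sketch below generates separate Deployment, Service, and HorizontalPodAutoscaler manifests for the agent and the application. Everything specific in it is an assumption made for the example: the resource names `app` and `waf-agent`, the ports, the replica limits, the CPU target, and the hypothetical environment variables `WAF_LISTEN_ADDR` and `WAF_UPSTREAM_URL`. The agent's upstream points at the application's service name, which resolves through cluster DNS when both live in the same namespace.

```python
import json

APP_PORT = 8080    # assumed application port
AGENT_PORT = 8000  # assumed agent listener port


def deployment(name, image, port, env=None):
    """Build a minimal apps/v1 Deployment with one container."""
    labels = {"app": name}
    container = {"name": name, "image": image, "ports": [{"containerPort": port}]}
    if env:
        container["env"] = [{"name": k, "value": v} for k, v in env.items()]
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            "replicas": 2,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {"containers": [container]},
            },
        },
    }


def service(name, port):
    """Expose the pods labelled app=<name> inside the cluster."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {"selector": {"app": name}, "ports": [{"port": port, "targetPort": port}]},
    }


def autoscaler(name, max_replicas):
    """Scale one Deployment on CPU, independently of any other."""
    cpu_target = {"type": "Utilization", "averageUtilization": 70}
    return {
        "apiVersion": "autoscaling/v2",
        "kind": "HorizontalPodAutoscaler",
        "metadata": {"name": name},
        "spec": {
            "scaleTargetRef": {"apiVersion": "apps/v1", "kind": "Deployment", "name": name},
            "minReplicas": 2,
            "maxReplicas": max_replicas,
            "metrics": [{"type": "Resource", "resource": {"name": "cpu", "target": cpu_target}}],
        },
    }


def multi_service_manifests(app_image, agent_image):
    """Return manifests for an agent tier in front of a separate app tier."""
    agent_env = {
        # Hypothetical setting names for the agent's reverse proxy mode.
        "WAF_LISTEN_ADDR": f"0.0.0.0:{AGENT_PORT}",
        "WAF_UPSTREAM_URL": f"http://app:{APP_PORT}",
    }
    return [
        deployment("app", app_image, APP_PORT),
        service("app", APP_PORT),
        autoscaler("app", 10),
        deployment("waf-agent", agent_image, AGENT_PORT, agent_env),
        service("waf-agent", AGENT_PORT),
        autoscaler("waf-agent", 5),
    ]


if __name__ == "__main__":
    for manifest in multi_service_manifests("example/app:1.0", "example/waf-agent:1.0"):
        print(json.dumps(manifest, indent=2))
```

Because each tier has its own autoscaler, a traffic spike that is mostly blocked at the agent can scale the agent pods without adding application pods. Note that CPU-based autoscaling also needs CPU requests set on the containers, which this sketch omits for brevity.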

Ultimate Flexibility to Secure Applications and Microservices

With Fastly’s Next-Gen WAF, you have ultimate flexibility to secure your applications and microservices quickly no matter how your DevOps team leverages containers with Kubernetes. These installation examples allow our customers to protect their apps on day one while ensuring that their applications will remain secure in the future, even if their app deployments with Kubernetes change.